Chicago Voice AI Intake

A commercial voice-powered AI intake assistant that handled family law calls over a three-week pilot at Legal Aid Chicago, triaging callers, flagging domestic violence priority cases, and reducing out-of-priority screening outcomes.

Project Description

Legal Aid Chicago receives over 78,000 calls per year, or roughly 6,500 per month. Staff can't screen, qualify, and refer everyone who calls. Callers hear that the queue is closed. People who need help don't get through.

Haven deployed a voice-powered AI assistant on Legal Aid Chicago's family law intake line. Callers with family law concerns were routed to the AI agent, which conducted a conversational screening, collected information, assessed eligibility against Legal Aid Chicago's specific criteria, and either flagged the caller for staff follow-up or provided referrals to appropriate organizations.
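Haven's actual decision logic is proprietary, so the following is only a minimal sketch of how a screening result could be routed to the kinds of outcomes reported for the pilot. Every name here (`Screening`, `Outcome`, `triage`), the field layout, and the order of the checks are assumptions made for illustration, not Haven's implementation or Legal Aid Chicago's actual criteria.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Outcome(Enum):
    REFERRED_OUT = "referred_out"   # screened out, referrals provided
    STAFF_REVIEW = "staff_review"   # screened in for staff follow-up
    PRIORITY = "priority"           # domestic violence + divorce/custody
    URGENT = "urgent"               # court date within a week


@dataclass
class Screening:
    """Hypothetical summary of what the agent collected on a call."""
    meets_eligibility: bool
    issue: str                       # e.g. "divorce", "custody", "other"
    domestic_violence: bool = False
    days_until_court: Optional[int] = None


def triage(s: Screening) -> Outcome:
    # Assumption: callers who do not meet the criteria are always referred out.
    if not s.meets_eligibility:
        return Outcome.REFERRED_OUT
    # Assumption: an imminent court date outranks every other flag.
    if s.days_until_court is not None and s.days_until_court <= 7:
        return Outcome.URGENT
    if s.domestic_violence and s.issue in {"divorce", "custody"}:
        return Outcome.PRIORITY
    return Outcome.STAFF_REVIEW


caller = Screening(meets_eligibility=True, issue="custody", domestic_violence=True)
print(triage(caller).value)
```

In a sketch like this, the rules stay in one small function so that staff-requested changes to the criteria touch a single place, and the staff dashboard can still override whatever outcome the function returns.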

The pilot ran for three weeks starting August 11, 2025. During that window, the system handled 417 calls totaling more than 22 hours of conversation. Average call length was 3.24 minutes, which reflects screening and triage rather than full detailed intake. Of those 417 calls, 281 were screened out (with referrals provided) and 136 were screened in for additional review. Within those totals, 44 calls were flagged as priority intakes for domestic violence survivors seeking help with divorce or custody, and 20 received specialized handling for urgent cases (court dates within a week).
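As a quick sanity check, the reported figures can be cross-checked against one another. The sketch below uses only the numbers stated above; the variable names are illustrative.

```python
# Figures as reported for the pilot.
total_calls = 417
avg_minutes = 3.24
screened_out = 281
screened_in = 136

# Screened-out and screened-in calls should account for every call handled.
assert screened_out + screened_in == total_calls

# Total talk time implied by the call count and the average call length.
total_hours = total_calls * avg_minutes / 60

# Share of calls that were passed on for additional staff review.
screened_in_share = screened_in / total_calls

print(f"Total talk time: {total_hours:.1f} hours")
print(f"Screened in: {screened_in_share:.1%}")
```

The implied talk time of about 22.5 hours agrees with the "more than 22 hours" figure, and roughly one call in three was screened in for staff review.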

The AI talked to hundreds of people who otherwise would have been told the queue was closed.

How It Works

Haven is a commercial product, so the full technical architecture is proprietary. What's publicly documented:

Voice AI agent conducts conversational intake over the phone. So far, testing has been in English only. The AI uses what Haven describes as "empathetic tone models" and "attorney-reviewed scripts."

Integration with existing phone infrastructure. Haven's phone number connected directly to Legal Aid Chicago's system, routing family law calls to the AI assistant. No infrastructure changes were required on Legal Aid Chicago's end.

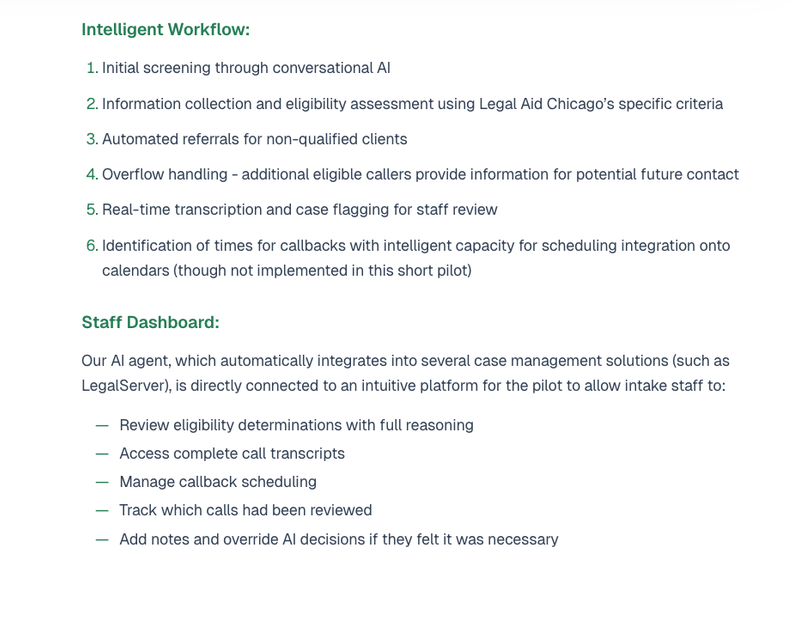

Staff dashboard. Intake staff can review eligibility determinations with full reasoning, access complete call transcripts, manage callback scheduling, track which calls have been reviewed, and add notes or override AI decisions.

Case management integration (possible, forthcoming). Haven reports that integration with LegalServer and other case management systems is possible, which would automatically upload the intake information collected during calls.

Security. Traffic is encrypted via HTTPS (TLS 1.3), access is governed by role-based controls, and PII is stored minimally and never used to train AI models.

What the Pilot Found

Screening accuracy improved significantly. With traditional phone triage, 46% of family law calls were ultimately rejected as out-of-priority after intake staff had gathered more information. Haven's AI agent reduced that rate to 21%. The AI was better at identifying priority legal issues upfront.
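The size of that change can be stated in two ways, and the short calculation below shows both. It uses only the two reported rates and assumes they are measured on comparable groups of family law calls, which the pilot summary does not spell out.

```python
# Share of screened-in family law calls later rejected as out-of-priority.
baseline_rate = 0.46  # traditional phone triage
pilot_rate = 0.21     # Haven's AI agent during the pilot

# Absolute change, in percentage points.
point_drop = (baseline_rate - pilot_rate) * 100

# Relative change, as a fraction of the baseline rate.
relative_drop = (baseline_rate - pilot_rate) / baseline_rate

print(f"Absolute drop: {point_drop:.0f} percentage points")
print(f"Relative drop: {relative_drop:.0%}")
```

Under that assumption, the rate fell by 25 percentage points, a relative reduction of roughly 54%: for every 100 calls passed to intake staff, about 25 fewer ended in an out-of-priority rejection.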

Clearer 'no' responses to those who don't qualify. The AI agent appeared to do better than human staff at telling callers during triage that they did not qualify for help. Delivering that decision clearly can reduce the time staff spend talking to people they cannot serve.

Domestic violence detection. The structured AI questioning revealed high-risk situations that callers didn't self-identify through phone menu options. In one documented case, the voice agent identified a stalking situation with retaliation concerns. The caller was flagged for urgent attention and received a same-day callback.

Caller satisfaction was high among those who replied. The satisfaction survey was limited to applicants who made it through screening and received a callback from intake staff. This follow-up survey found an average rating of 4.1 out of 5, with 55% of respondents rating their experience 5/5 and 24% rating it 4/5. Callers described the experience as "clear," "easy to speak to," and "just like a live person."

People disclosed sensitive information to the AI. Callers were comfortable describing abuse details and other sensitive situations to the AI agent, which contradicted a common assumption in the field that people would be reluctant to share such details with an automated system.

Mid-pilot redesign. Halfway through, Legal Aid Chicago realized that its traditional intake was screening in too many people as eligible. Haven helped redesign both the questions and the overall intake process, producing a more efficient system. This is a notable finding: the AI pilot surfaced problems with the human workflow that hadn't been visible before.

Implementation required 2–3 iteration cycles. Haven worked through multiple versions of the conversational flow and eligibility criteria with Legal Aid Chicago's team. Refinements happened via email and short calls. This is reported as typical for new Haven implementations.