Eviction Notice Defect Detector

A tool that lets legal aid staff upload a 3-day eviction notice, automatically flags procedural and substantive defects under California law, and generates a draft Eviction Answer response form, reducing a 30-minute manual review to under five minutes.

Project Description

What It Does

A tenant walks into a California court clinic or legal aid office with an eviction (UD) lawsuit and the 3-day eviction notice they received before the lawsuit. Staff need to do three things fast: check whether the notice has legal defects that could form the basis of a defense, interview the tenant, and fill out a UD-105 response form (often 30+ pages with attachments). All of this happens under a tight statutory deadline.

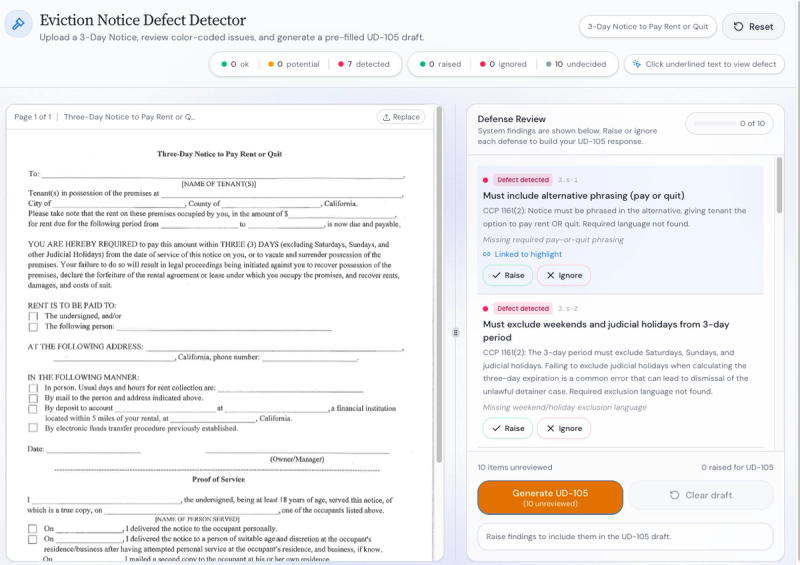

The Eviction Notice Defect Detector handles the first and third steps. A staff member uploads the notice as a PDF. The system extracts key fields (landlord name, rent amount, service date, notice period, payment instructions, forfeiture language) and runs them against a checklist of nine California-specific defect types. The results appear in a split-screen interface: the original notice on the left with color-coded annotations, and a defect checklist on the right. For each flagged defect, the user clicks "Raise" or "Ignore." Raised defects automatically map to the correct sections of a draft UD-105 response form, which the tool generates as a downloadable PDF.

The nine defect types the tool checks:

- Missing disjunctive "pay OR quit" phrasing

- Insufficient notice period (fewer than 3 business days)

- No exact dollar amount stated

- Missing payee information

- Missing payment hours/location

- Missing financial institution details

- Missing "previously established" language for electronic payment

- Rent claimed is over one year old

- No forfeiture declaration

Who It's Built For

The team designed the tool around two user personas drawn from the legal aid organization's actual staff.

Lawyer John is a public-interest attorney handling a high volume of eviction cases. He needs speed and accuracy: flag the defects, draft the response, move to the next case.

Volunteer Val conducts intake without formal legal training. She needs the tool to explain what each defect means in plain language, make the required action obvious, and produce outputs that an attorney can quickly validate.

A key design decision: the team rejected a chatbot-style interface. In eviction defense, long text summaries reproduce the information overload that already bogs down volunteers. Instead, the interface is built around the annotated document itself. Click a defect in the checklist, and the corresponding text highlights in the PDF. Click an annotation in the PDF, and the checklist scrolls to the matching item.

How It Works (Architecture)

The current prototype uses a rules-first, AI-assisted approach.

PDF text extraction pulls readable text from the uploaded notice. The system works best with born-digital PDFs; scanned documents and photos are a known limitation.

Rule-based defect detection runs nine deterministic checks against extracted fields. This approach was chosen deliberately to avoid sending sensitive tenant data to external AI providers. It handles the structurally simple defect types reliably: on the benchmark, simple string-presence checks (is "OR" in the demand phrase? is a dollar amount present?) achieve perfect precision and recall. Date-dependent and context-sensitive defects are where it falls short.
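A minimal sketch of what two of these string-presence checks might look like follows. The regular expressions, the `Finding` type, and the defect labels are illustrative assumptions rather than the prototype's actual rules, and real notices will use wording these patterns do not cover.

```python
import re
from dataclasses import dataclass

# Hypothetical patterns for illustration only.
DOLLAR_AMOUNT = re.compile(r"\$\s?\d[\d,]*(?:\.\d{2})?")
PAY_OR_QUIT = re.compile(
    r"\bpay\b.*?\bor\b.*?\b(?:quit|vacate)\b",
    re.IGNORECASE | re.DOTALL,
)


@dataclass
class Finding:
    defect: str    # hypothetical defect label
    flagged: bool  # True when the notice appears to have this defect


def check_notice(text: str) -> list[Finding]:
    """Run two deterministic presence checks against the notice text."""
    return [
        # Flag when no "pay ... or ... quit/vacate" phrasing is found.
        Finding("missing_pay_or_quit", PAY_OR_QUIT.search(text) is None),
        # Flag when no dollar figure appears anywhere in the notice.
        Finding("no_exact_dollar_amount", DOLLAR_AMOUNT.search(text) is None),
    ]


valid = check_notice(
    "You must pay rent of $1,250.00 within three days or quit the premises."
)
defective = check_notice("You must pay the rent you owe and vacate.")
```

Because each check is a pure function of the text, the same input always yields the same flags, and nothing has to leave the machine.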

LLM-based extraction (Google Gemini) was tested as an alternative pathway and achieved 73.3% exact-match accuracy on the benchmark dataset, compared to 20% for the regex-only approach. The improvement is concentrated in defects that require date parsing, context-sensitive field detection, and interpreting varied prose. The team has not yet integrated LLM extraction into the production prototype because of data privacy concerns, but identified three paths forward: self-hosted open-source models (Llama 3, Mistral, Qwen), zero-data-retention agreements with commercial providers, and secure inference solutions like Tinfoil AI.

UD-105 generation maps raised defects to the correct form sections and produces a pre-populated PDF. The attorney reviews and edits before filing.
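The mapping step can be sketched as a lookup from raised defects to form sections. The section labels below are placeholders and do not correspond to real UD-105 item numbers; `Decision`, `SECTION_FOR_DEFECT`, and `sections_to_fill` are hypothetical names used only for illustration.

```python
from enum import Enum


class Decision(Enum):
    RAISE = "raise"
    IGNORE = "ignore"


# Placeholder labels only: these are NOT actual UD-105 item numbers.
SECTION_FOR_DEFECT = {
    "missing_pay_or_quit": "PLACEHOLDER_SECTION_A",
    "no_exact_dollar_amount": "PLACEHOLDER_SECTION_A",
    "no_forfeiture_declaration": "PLACEHOLDER_SECTION_B",
}


def sections_to_fill(decisions: dict[str, Decision]) -> dict[str, list[str]]:
    """Group raised defects by the form section they would populate."""
    sections: dict[str, list[str]] = {}
    for defect, decision in decisions.items():
        if decision is Decision.RAISE:
            sections.setdefault(SECTION_FOR_DEFECT[defect], []).append(defect)
    return sections


draft = sections_to_fill(
    {
        "missing_pay_or_quit": Decision.RAISE,
        "no_exact_dollar_amount": Decision.IGNORE,
        "no_forfeiture_declaration": Decision.RAISE,
    }
)
```

Ignored defects never reach the form, so the draft reflects only what the reviewer chose to assert.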

What They Tested

The team built a synthetic evaluation dataset of 15 eviction notices (7 valid, 8 invalid with 1–3 defects each), generated both programmatically and via LLM to cover varied language. Each notice carries ground-truth defect labels. The evaluation computes per-defect precision, recall, and F1 scores, plus overall exact-match accuracy.
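As a rough sketch of how these metrics can be computed, assume each notice's ground truth and prediction are represented as sets of defect IDs. The function names and the convention of scoring 0.0 when a denominator is zero are assumptions, not details from the team's evaluation code.

```python
def per_defect_scores(
    truth: list[set[int]], predicted: list[set[int]], defect_ids: list[int]
) -> dict[int, tuple[float, float, float]]:
    """Return (precision, recall, F1) for each defect ID across all notices."""
    scores = {}
    for d in defect_ids:
        pairs = list(zip(truth, predicted))
        tp = sum(1 for t, p in pairs if d in t and d in p)
        fp = sum(1 for t, p in pairs if d not in t and d in p)
        fn = sum(1 for t, p in pairs if d in t and d not in p)
        # Assumed convention: report 0.0 when a ratio is undefined.
        precision = tp / (tp + fp) if tp + fp else 0.0
        recall = tp / (tp + fn) if tp + fn else 0.0
        f1 = (
            2 * precision * recall / (precision + recall)
            if precision + recall
            else 0.0
        )
        scores[d] = (precision, recall, f1)
    return scores


def exact_match_accuracy(truth: list[set[int]], predicted: list[set[int]]) -> float:
    """Fraction of notices whose predicted defect set equals the ground truth."""
    return sum(1 for t, p in zip(truth, predicted) if t == p) / len(truth)


# Toy data, not the team's benchmark.
truth = [set(), {1}, {1, 2}]
predicted = [set(), {1}, {1}]
scores = per_defect_scores(truth, predicted, [1, 2])
accuracy = exact_match_accuracy(truth, predicted)
```

Exact match is the stricter measure: a notice counts only if every defect is labeled correctly. On a 15-notice benchmark, the reported 73.3% and 20% figures are consistent with 11 and 3 fully correct notices, respectively.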

Key findings from the benchmark:

The regex pipeline scores perfectly on structurally simple defects (disjunctive phrasing, dollar amount presence) but fails completely on date-dependent defects (notice period, rent age) and generates false positives on context-sensitive fields (payee info, payment hours). Overall: 20% exact match.
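The date-dependent checks are hard to extract but simple to evaluate once the dates are parsed. The sketch below assumes that "business days" means weekdays not in a caller-supplied holiday set and that counting starts the day after service. Both are simplifying assumptions, and the function names are hypothetical.

```python
from datetime import date, timedelta


def business_days_allowed(
    service_date: date, deadline: date, holidays: frozenset[date] = frozenset()
) -> int:
    """Count weekdays after service_date, up to and including deadline."""
    count = 0
    day = service_date + timedelta(days=1)  # assumed: the service day is not counted
    while day <= deadline:
        if day.weekday() < 5 and day not in holidays:  # Monday=0 ... Friday=4
            count += 1
        day += timedelta(days=1)
    return count


def notice_period_too_short(
    service_date: date,
    deadline: date,
    holidays: frozenset[date] = frozenset(),
    required: int = 3,
) -> bool:
    """Flag the notice when it allows fewer than the required business days."""
    return business_days_allowed(service_date, deadline, holidays) < required


served = date(2024, 3, 1)  # a Friday
short = notice_period_too_short(served, date(2024, 3, 4))  # deadline on Monday
enough = notice_period_too_short(served, date(2024, 3, 6))  # deadline on Wednesday
```

Separating date extraction from date arithmetic lets the arithmetic stay deterministic even if the extraction step is later handled by an LLM.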

The LLM pipeline resolves the date-parsing and context-sensitivity failures. Overall: 73.3% exact match. The remaining gap is concentrated in forfeiture declaration detection (defect 9).

The team also built a usability evaluation rubric covering five dimensions: first impression and learnability, PDF viewer behavior, defect checklist clarity, UD-105 output quality, and efficiency under real conditions (target: full review in under 5 minutes).

Design Decisions Worth Noting

"Raise" vs. "Ignore" instead of a checklist. Early versions used a standard checklist format, which confused users about whether they were confirming a defect or dismissing it. The team replaced this with explicit action buttons after user testing.

Defenses scoped to the face of the notice only. Defenses that depend on tenant-specific facts (habitability conditions, payment disputes, landlord retaliation) were excluded from the main checklist. These require an interview and cannot be inferred from the document. They are flagged in a secondary section for future development.

No data storage. The prototype processes notices on a per-session basis. No tenant data is archived within the system.

Dual-use risk acknowledged. The team flagged that the same defect-detection logic could be used by landlords to strengthen their notices. Future deployment decisions should account for this.

What's Next

The team will continue working to get the prototype ready for piloting. If the tool can be strengthened and deployed with appropriate supervision and protections, it can be integrated into the legal aid organization's actual intake workflow, with volunteers using it for document review and attorneys validating outputs.

Planned next steps:

- LLM extraction integration as a modular upgrade, tested alongside the existing regex pipeline. Priority: self-hosted or zero-data-retention approaches.

- Expanded benchmark from 15 to 50–100 synthetic notices with broader defect co-occurrence patterns.

- Interview support tab that guides the structured tenant interview and maps responses to UD-105 sections that cannot be inferred from the notice alone.

- Voice-based intake extension allowing tenants to respond to structured prompts remotely.

Why It Matters for the Field

This project demonstrates a pattern that applies well beyond San Diego eviction defense. The 80/20 insight: deterministic rule-based checks handle the structurally simple defects perfectly, and AI fills in where rules break down (dates, context, varied language). The team didn't start with AI and add rules. They started with rules and added AI only where the rules failed, with clear benchmarks showing exactly where each approach succeeds and fails.

The evaluation methodology is also replicable. Synthetic notice generation with known ground-truth labels, per-defect precision/recall scoring, and a separate usability rubric give other teams a template for testing similar document-review tools.

The annotated-document interface pattern (PDF on the left, actionable checklist on the right, bidirectional linking between them) could apply to any legal document review workflow: debt collection letters, benefits denial notices, court forms.