AVA: Alaska Virtual Assistant for Probate

A court-built AI chatbot that answers public questions about Alaska's probate process, grounded in the Alaska Court System's self-help webpages.

Project Description

Organization: Alaska Court System, with technical development by LawDroid and support from the National Center for State Courts (NCSC)

Status: Pilot (launched in early 2026 after 15+ months of development)

Jurisdiction: Alaska (statewide; probate only)

Issue Area: Estates / Probate

Live: https://courts.alaska.gov/shc/AVA/ava.htm

What It Does

When someone dies in Alaska, their family often has to navigate probate: the court process for authorizing someone to distribute the estate. The forms are confusing, the procedures are unfamiliar, and most people going through it are grieving. Alaska's court system runs a family law helpline staffed by court employees who provide free guidance on legal matters. The administrative director, Stacey Marz, wanted to build something similar for probate: a chatbot that could provide the same self-help experience as talking to a human facilitator.

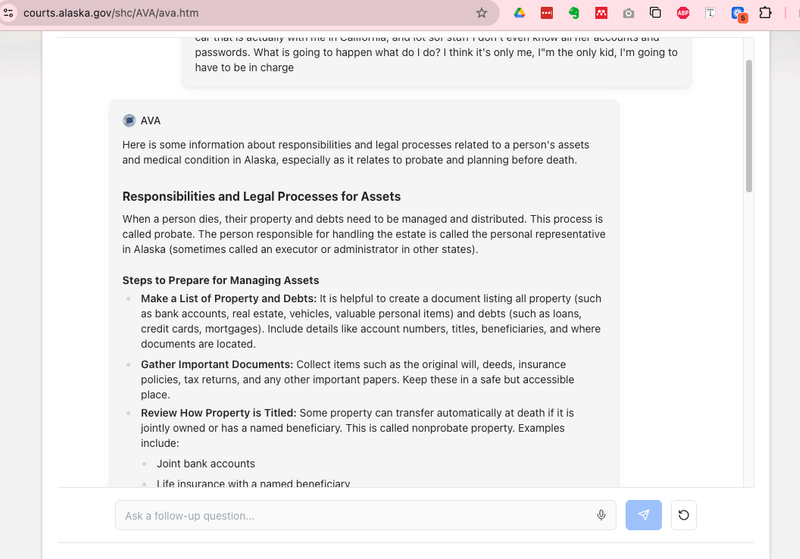

AVA answers questions about types of probate cases, wills and trusts, personal representative responsibilities, distributing personal property without an estate case, and which court forms to use. Every answer includes links to the court's self-help probate webpages that AVA relied on, so users can verify the information. AVA is free, available 24/7, and scoped exclusively to Alaska probate law. It cannot give legal advice, predict case outcomes, file documents, review uploaded documents, or answer questions about other states or other areas of law.

How It Works

LawDroid built AVA. The chatbot uses generative AI grounded in the Alaska Court System's probate web content: it is designed to reference only the court's own published self-help materials and does not search the wider web.

The technical details of the underlying model are not fully public, but NBC News reporting indicates that AVA was tested across multiple models in the GPT family, with the team favoring rule-following behavior over models that "want to prove they're the smartest guy in the room." Cost is low: under one configuration, 20 queries cost about 11 cents.
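To put the reported cost in per-query terms, the arithmetic can be sketched as below. Only the two reported figures (about 11 cents, 20 queries) come from the reporting; the monthly volume is a hypothetical number chosen purely for illustration, and real costs would vary with model, prompt length, and configuration.

```python
# Figures reported for one configuration: about 11 cents for 20 queries.
REPORTED_COST_CENTS = 11.0
REPORTED_QUERIES = 20

# Hypothetical monthly volume, NOT from the source; used only to illustrate scaling.
HYPOTHETICAL_MONTHLY_QUERIES = 10_000


def cost_per_query_cents(total_cents: float, queries: int) -> float:
    """Average cost of a single query, in cents."""
    if queries <= 0:
        raise ValueError("queries must be positive")
    return total_cents / queries


def projected_cost_dollars(queries: int, per_query_cents: float) -> float:
    """Linear projection of cost in dollars, assuming the per-query cost stays constant."""
    return queries * per_query_cents / 100.0


per_query = cost_per_query_cents(REPORTED_COST_CENTS, REPORTED_QUERIES)
monthly = projected_cost_dollars(HYPOTHETICAL_MONTHLY_QUERIES, per_query)

print(f"Per query: {per_query:.2f} cents")
print(f"Projected for {HYPOTHETICAL_MONTHLY_QUERIES} queries: ${monthly:.2f}")
```

The linear projection is a simplification: it assumes every query costs the same as the average of the reported 20.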

Users interact through a web-based chat interface. They agree to terms upfront (no personal information, AI may make mistakes, check the linked webpages). The court retains transcripts of questions and answers to improve AVA and may partner with outside organizations to audit answer quality.

What Made This Hard

AVA was supposed to be a three-month project. It took over 15 months.

Hallucinations. Regardless of which model the team used, AVA generated false information. When asked "Where do I get legal help?" it once responded that there's a law school in Alaska and suggested looking at the alumni network. There is no law school in Alaska. The team worked extensively to constrain the chatbot to the court's own knowledge base and prevent wider web searches.

Accuracy standards in a high-stakes domain. Marz drew a sharp line: "If people are going to take the information they get from their prompt and they're going to act on it and it's not accurate or not complete, they really could suffer harm. It could be incredibly damaging to that person, family or estate." The team held AVA to a higher standard than a typical minimum-viable-product launch.

Tone calibration. Early versions were too empathetic. User testing revealed that people actively grieving didn't want another entity saying "I'm sorry for your loss." The team stripped out the condolences: users did not need one more, least of all from an AI chatbot.

Evaluation was labor-intensive. The team designed a 91-question evaluation set on probate topics. It proved too time-consuming to run and evaluate with human review, so they refined it to 16 questions: some AVA had previously answered incorrectly, some complex, some basic and frequently asked.
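One way to picture a reduced set that still covers all three kinds of question is a round-robin pick across categories. This is only a sketch: how the team actually chose its 16 questions is not described, the question IDs are placeholders, and the even split of the 91-question pool across categories is invented for illustration.

```python
from dataclasses import dataclass

# Category names paraphrase the three kinds of question described in the text.
CATEGORIES = ("previously_incorrect", "complex", "basic_faq")


@dataclass(frozen=True)
class EvalQuestion:
    qid: str        # placeholder identifier, not a real question
    category: str   # one of CATEGORIES


def select_review_set(pool, target_size):
    """Pick questions round-robin across categories until target_size is reached
    or the pool runs out, so no category is left unrepresented."""
    buckets = {c: [q for q in pool if q.category == c] for c in CATEGORIES}
    selected = []
    while len(selected) < target_size and any(buckets.values()):
        for category in CATEGORIES:
            if buckets[category] and len(selected) < target_size:
                selected.append(buckets[category].pop(0))
    return selected


# Hypothetical pool of 91 placeholder questions; the category assignment is made up.
pool = [EvalQuestion(f"Q{i:02d}", CATEGORIES[i % 3]) for i in range(1, 92)]
review_set = select_review_set(pool, 16)

for category in CATEGORIES:
    count = sum(1 for q in review_set if q.category == category)
    print(category, count)
```

A fixed, small set like this is cheap enough to re-run with human review whenever the prompt or the underlying model changes.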

Continuous maintenance required. Because the underlying models change with new versions and updates, the team expects to regularly monitor AVA for behavioral or accuracy changes, update prompts, and adapt when models are retired. This is not a build-once-and-deploy system.

Goals shifted. The original vision was to replicate what human facilitators do at the self-help center. The team scaled back: they weren't confident the chatbot could work at that level because of accuracy and completeness issues. The current version provides information and links to authoritative sources rather than attempting to fully replace the human facilitator experience.

Design Decisions Worth Noting

Single knowledge source. AVA only provides information from the Alaska Court System's own webpages. It has been instructed not to consult external sources. Every answer links back to the specific pages it relied on. This is both a quality control measure and a transparency mechanism.
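The link-back requirement lends itself to an automated check on each answer. The sketch below is not AVA's implementation: it assumes that an acceptable answer cites at least one URL and that every cited URL sits on the court's host, which is taken from the Live URL above. The function names and sample answers are hypothetical.

```python
import re
from urllib.parse import urlparse

# Host taken from the Live URL; treating it as the sole allowed source is an assumption.
ALLOWED_HOST = "courts.alaska.gov"

# Simple pattern for URLs in plain text; stops at whitespace and closing brackets.
URL_PATTERN = re.compile(r"https?://[^\s)\]>]+")


def extract_urls(answer: str) -> list[str]:
    """Return URLs found in the answer, with trailing sentence punctuation removed."""
    return [url.rstrip(".,;:") for url in URL_PATTERN.findall(answer)]


def off_source_links(answer: str) -> list[str]:
    """Return any cited URLs whose host is not the allowed court host."""
    return [
        url for url in extract_urls(answer)
        if (urlparse(url).hostname or "").lower() != ALLOWED_HOST
    ]


def passes_source_check(answer: str) -> bool:
    """True only if the answer cites at least one URL and all of them are on the court host."""
    return bool(extract_urls(answer)) and not off_source_links(answer)


# Hypothetical answers for illustration only.
grounded = "See the probate self-help page: https://courts.alaska.gov/shc/AVA/ava.htm."
ungrounded = "Try the alumni network at https://example.com/law-school."

print(passes_source_check(grounded))
print(off_source_links(ungrounded))
```

A check like this only confirms where the links point. It does not verify that the answer text matches what those pages say, which is why human review remains part of the evaluation.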

No document upload. Users cannot upload wills, forms, or other documents for AVA to review. This limits the system's exposure to personally identifiable information (PII) and avoids the much harder problem of document-specific legal analysis.

No personal information requested. Users are explicitly instructed not to share names, addresses, Social Security numbers, or financial information. The court does retain question-and-answer transcripts for quality improvement.

Jurisdiction-scoped. AVA knows about probate in Alaska and nothing else. If you ask about another state or another area of law, it says it can't help and directs you to other self-help resources.

Model personality selection. The team deliberately chose model configurations that prioritize rule-following over expressiveness. For a legal application, you want compliance and plain-language explanation, not creative elaboration.

What's Public

The FAQ, the live chatbot, the NBC News article (January 3, 2026), and the NCSC's involvement are all publicly documented. The evaluation methodology (a 91-question set refined to 16) is described, but the evaluation results are not published. The court has indicated it may partner with outside organizations to audit answer quality and publish reports.

Why It Matters for the Field

The accuracy-versus-deployment tradeoff is a central lesson. The court explicitly rejected the "minimum viable product" approach that works for other technology projects. In a domain where people act on what they're told, incomplete or inaccurate information causes real harm. The team accepted a delay of about a year beyond the planned three months rather than release a chatbot that might mislead grieving families.

The tone calibration finding (stripping out condolences after user testing) is a concrete, replicable design insight for any team building client-facing legal AI. What seems like good UX design (expressing sympathy) can actively annoy users in crisis who just want answers.

The evaluation bottleneck (91 questions was too many to evaluate with human review) illustrates a problem every legal AI project will face: rigorous evaluation is labor-intensive, and teams must find the minimum viable evaluation set that still catches critical failures. The refined 16-question set could become a template for other court chatbot evaluations if published.