Reasonable Accommodation Demand Letter Generator

A voice-enabled AI agent ("Sofia") interviews tenants with disabilities by phone in English or Spanish, collects the facts needed for a Fair Housing Act reasonable accommodation demand letter, and generates a draft for attorney review, replacing a multi-step, multi-interview workflow with a single structured phone call.

Project Description

What It Does

A tenant with a disability calls Legal Aid of San Bernardino because their landlord is ignoring their request for a reasonable accommodation: maybe they need an exception to a no-pet policy for an emotional support animal, or they need their rent due date moved because their disability income arrives late in the month, or they need a closer parking spot. Under the Fair Housing Act, landlords must provide reasonable accommodations. A well-drafted demand letter often resolves these disputes without a formal complaint to HUD or the DOJ.

The problem is the workflow. LASSB's current process requires two separate interviews: first a paralegal conducts intake, then an attorney conducts a second detailed interview to gather the facts needed for the letter, then the attorney drafts it. This multi-step process takes significant staff time per case, and LASSB's small team can't keep pace with demand.

The Demand Letter Generator replaces the second interview and the drafting step with a single AI-conducted phone call. Here's how it works:

Step 1: Paralegal creates the case. During the normal intake call, the paralegal enters the client's information into LegalServer (LASSB's case management system). On the attorney dashboard, the paralegal inputs the LegalServer client ID. The system pulls client and landlord details (names, addresses, email) via API and generates a 4-digit PIN. The paralegal gives the PIN and Sofia's phone number to the client.

Step 2: Client calls Sofia. The client dials in, chooses English or Spanish, enters their PIN (which links to their pre-loaded case data), and begins a structured interview. Sofia asks about the landlord rule or practice causing difficulty, the client's disability, how the disability interacts with their living situation, what accommodation they need, and the timeline for a response. Sofia asks one question at a time, confirms each piece of information before moving on, and speaks in a trauma-informed, empathetic tone. Sofia never diagnoses disabilities and never provides legal or medical advice.

Step 3: Letter generation. Once the interview is complete and the client confirms the information, the system generates a formatted demand letter using GPT-5.1, citing the Fair Housing Act, California FEHA, and Civil Code §54.1. The letter follows a standardized eight-section structure: Date/Header, Salutation, Opening, Disability Paragraph, Accommodation Request, Legal Basis, Documentation/Deadline, Closing. The letter goes directly to the attorney dashboard. The client never sees it.

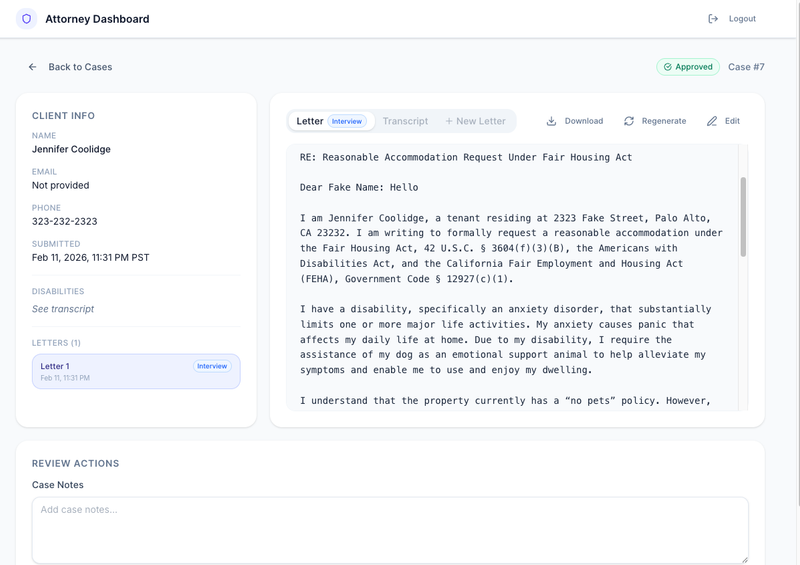

Step 4: Attorney review. Attorneys log into the dashboard, see a queue of cases with status indicators, and can review each generated letter alongside the full interview transcript. They can edit the letter, regenerate it with updated information, add case notes, and approve or reject before sending to the landlord. After review, the attorney calls the client to confirm details and discuss next steps.
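The eight-section structure named in Step 3 can be sketched as a fixed ordering that every draft is checked against before it reaches the dashboard. This is a minimal illustration, not the team's implementation: the section names come from the description above, while `LETTER_SECTIONS` and `assemble_letter` are hypothetical names introduced here.

```python
from typing import Mapping

# Section names and order as listed in Step 3.
LETTER_SECTIONS = (
    "Date/Header",
    "Salutation",
    "Opening",
    "Disability Paragraph",
    "Accommodation Request",
    "Legal Basis",
    "Documentation/Deadline",
    "Closing",
)


def assemble_letter(parts: Mapping[str, str]) -> str:
    """Join the sections in the standard order (hypothetical helper).

    Raises ValueError if any section is missing or blank, so an
    incomplete draft is caught before attorney review.
    """
    missing = [name for name in LETTER_SECTIONS if not parts.get(name, "").strip()]
    if missing:
        raise ValueError("missing sections: " + ", ".join(missing))
    return "\n\n".join(parts[name].strip() for name in LETTER_SECTIONS)
```

Failing loudly on a missing section is one possible design choice; a production system might instead flag the gap on the dashboard for the reviewing attorney.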

The Two-Model Architecture

The system uses two different models for two different jobs:

GPT-4.1-mini powers the phone conversation. It's lightweight and fast, which matters on a phone call where silence feels much longer than in a chat window. The system prompt constrains Sofia to one or two short sentences per response.

GPT-5.1 generates the demand letter. The team reserved the most capable model for the highest-stakes output, where legal accuracy and professional quality matter most.
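One way to picture the split is a small routing table that maps each job to a model and a reply-length cap. The sketch below is an assumption-laden illustration: the identifier strings `"gpt-4.1-mini"` and `"gpt-5.1"`, the task names, and the helper functions are all hypothetical, and in the described system the length limit is enforced through the system prompt rather than a post-hoc check like this one.

```python
import re
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class ModelProfile:
    model: str                    # model identifier string (assumed spelling)
    max_sentences: Optional[int]  # reply-length cap; None means no cap


# Hypothetical task names; the two-model split itself is from the text.
PROFILES = {
    "phone_turn": ModelProfile(model="gpt-4.1-mini", max_sentences=2),
    "letter_draft": ModelProfile(model="gpt-5.1", max_sentences=None),
}


def profile_for(task: str) -> ModelProfile:
    """Look up which model handles a given task."""
    return PROFILES[task]


def within_length_cap(reply: str, profile: ModelProfile) -> bool:
    """Rough check that a reply respects the profile's sentence cap."""
    if profile.max_sentences is None:
        return True
    # Naive sentence split on terminal punctuation; good enough for a sketch.
    sentences = [s for s in re.split(r"[.!?]+", reply) if s.strip()]
    return len(sentences) <= profile.max_sentences
```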

The PIN System and Its Failsafes

Dictating names, addresses, and email addresses over the phone is error-prone, especially through speech-to-text. The team's solution: don't do it. The PIN system pulls logistical details from LegalServer so the phone call only handles the conversational portions where voice works well.

Two failsafes protect against failure. If the LegalServer API call fails when the paralegal creates the case, the paralegal can manually enter the information on the dashboard. If the client calls Sofia and the PIN doesn't match or has expired (PINs last 24 hours), Sofia falls back to collecting the logistical information by voice.
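The PIN lookup and its voice fallback can be sketched as follows. This is a minimal in-memory illustration under stated assumptions: `CaseRecord`, `PinStore`, and `start_call` are hypothetical names, the real system's storage and LegalServer integration are not shown, and only the 4-digit format, the 24-hour lifetime, and the fallback behavior come from the description above.

```python
import secrets
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Dict, Optional

PIN_TTL = timedelta(hours=24)  # from the text: PINs last 24 hours


@dataclass
class CaseRecord:
    client_name: str
    landlord_name: str
    landlord_address: str
    created_at: datetime


class PinStore:
    """In-memory stand-in for whatever storage the real system uses."""

    def __init__(self) -> None:
        self._cases: Dict[str, CaseRecord] = {}

    def issue_pin(self, case: CaseRecord) -> str:
        # Draw 4-digit PINs until one is unused. A real system would also
        # need to purge expired PINs so the 10,000-value space never fills.
        while True:
            pin = f"{secrets.randbelow(10_000):04d}"
            if pin not in self._cases:
                self._cases[pin] = case
                return pin

    def resolve(self, pin: str, now: datetime) -> Optional[CaseRecord]:
        case = self._cases.get(pin)
        if case is None or now - case.created_at > PIN_TTL:
            return None  # unknown or expired PIN
        return case


def start_call(store: PinStore, pin: str, now: datetime) -> str:
    """Return which interview path the call should take."""
    if store.resolve(pin, now) is not None:
        return "preloaded"       # names and addresses come from intake
    return "voice_fallback"      # collect logistical details by voice
```

Passing `now` in explicitly keeps the expiry logic easy to test without waiting 24 hours.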

Bilingual Support

Sofia detects language preference at the start of the call and conducts the entire conversation in the caller's chosen language. The final demand letter is always generated in English, as required for legal purposes.

What They Tested

The team tested across two rounds. Round I used the text-based chatbot (before phone integration) across multiple use-case scenarios: waivers of no-pet policies, waivers of pet fees, waivers of income requirements, requests to move rent due dates, requests for closer parking spots, and requests to submit forms in alternative formats. Each scenario was tested with cognitive, mobility, and visual impairments.

Round II tested the voice-call version end-to-end, including letter generation into the attorney dashboard. This round revealed that phone integration introduced new issues: the chatbot sometimes pushed clients toward naming a specific diagnosis, misunderstood ambiguous answers about medical documentation, and occasionally truncated saved letters on the dashboard.

The evaluation benchmark consists of four sample demand letters provided by LASSB (de-identified). The team built rubrics covering three components: the chatbot conversation (tone, structure, usability), the generated letter (content accuracy, legal citations, structure, grammar), and the attorney dashboard (data accuracy, navigability, editability, export capability).
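The three-component rubric can be represented as a simple mapping from component to criteria, with a per-component average. The criteria names below are taken from the paragraph above; the numeric rating scale and the `component_scores` helper are assumptions for illustration, since the write-up does not say how the team scored each criterion.

```python
# Components and criteria as listed in the text.
RUBRIC = {
    "conversation": ("tone", "structure", "usability"),
    "letter": ("content accuracy", "legal citations", "structure", "grammar"),
    "dashboard": ("data accuracy", "navigability", "editability", "export capability"),
}


def component_scores(ratings):
    """Average the ratings for each component (hypothetical scoring scheme).

    `ratings` maps component -> {criterion: number}. A missing criterion
    raises KeyError so an incomplete evaluation is not silently averaged.
    """
    scores = {}
    for component, criteria in RUBRIC.items():
        given = ratings.get(component, {})
        missing = [c for c in criteria if c not in given]
        if missing:
            raise KeyError(f"{component}: missing ratings for {missing}")
        scores[component] = sum(given[c] for c in criteria) / len(criteria)
    return scores
```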

Key findings from testing:

Letter quality improved significantly between rounds after the team restricted legal citations to FHA and FEHA only (removing ADA references), suppressed explicit naming of mental health diagnoses in favor of general language ("a disability that substantially limits one or more major life activities"), and added a clear accommodation request statement at the top of each letter.

Phone integration introduced latency and accuracy challenges. Twilio's speech-to-text struggled with names, addresses, and emails, which led to the PIN-based architecture. The team also discovered that the voice chatbot did not work on every phone carrier: AT&T users experienced connection failures, which are still under investigation.

The chatbot's tendency to push for a diagnosis persisted even after the team explicitly instructed it not to. The model nudges clients toward naming a condition rather than accepting symptom-level descriptions. This is flagged as a priority fix for future iterations.
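The citation restriction and diagnosis suppression described in these findings could be backed by an automated check on each generated draft before it reaches an attorney. The sketch below is not something the write-up says the team built: `lint_draft` is a hypothetical helper, and the diagnosis term list is illustrative only and far from complete.

```python
import re
from typing import List

# Citations the team removed from letters (ADA references).
DISALLOWED_CITATIONS = (
    re.compile(r"\bADA\b"),  # case-sensitive so the name "Ada" is not flagged
    re.compile(r"Americans with Disabilities Act", re.IGNORECASE),
)

# Illustrative terms only; a real list would need clinical and legal input.
DIAGNOSIS_TERMS = ("anxiety", "depression", "ptsd", "bipolar")


def lint_draft(text: str) -> List[str]:
    """Return human-readable issues found in a draft letter."""
    issues = []
    if any(p.search(text) for p in DISALLOWED_CITATIONS):
        issues.append("cites the ADA; letters should cite FHA and FEHA only")
    lowered = text.lower()
    for term in DIAGNOSIS_TERMS:
        if re.search(rf"\b{re.escape(term)}\b", lowered):
            issues.append(f"names a diagnosis: {term}")
    return issues
```

A check like this only catches known terms, so it would complement attorney review rather than replace it.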

Design Decisions Worth Noting

Client never sees the letter. An earlier version showed the generated letter to the client before attorney review. The team removed this after LASSB expressed concern about unsupervised AI work products reaching clients who might forward them to landlords. Attorney review now happens first, followed by a phone conversation with the client to confirm details.

No real client data in training. The team deliberately avoided using actual client letters as training data, relying only on de-identified example letters provided by LASSB. This limits letter quality (especially for uncommon accommodation types) but preserves client confidentiality.

Mental health diagnosis suppression. When a client's disability involves anxiety, depression, or other mental health conditions, the generated letter uses general statutory language rather than naming the diagnosis. The client can decide later whether to disclose if the landlord requests further information.

Replit as deployment platform. The prototype runs on Replit, which introduces data security questions for production use. The team recommends a cybersecurity audit before deployment and notes that Replit Pro may integrate with LASSB's Azure for Business infrastructure.

Risks and Limitations

Data security. Client PII currently lives in Replit's database. The team does not have full visibility into Replit's architecture or vulnerability profile. Production deployment requires moving to a more controlled environment.

Equity concerns. Clients who understand the legal system better will provide clearer information, potentially producing higher-quality letters. Clients from marginalized backgrounds, non-native English speakers, or those without medical documentation may receive weaker drafts. Attorney review mitigates this but doesn't eliminate it.

Limited benchmark data. Four sample letters make for a small benchmark. The model performs well on common scenarios (emotional support animals), but quality is untested across the full range of accommodation types LASSB encounters.

Spanish capabilities not fully tested. The team confirmed the Spanish-language interview asks the same questions, but has not directly tested how well Spanish-language answers translate into the English-language letter.

What's Next

The team recommends future development focus on:

Continued testing of the voice chatbot's conversation quality, particularly the diagnosis-pushing behavior and Spanish-language capabilities.

Deeper integration with LASSB's LegalServer for case tracking and document storage.

Expansion from reasonable accommodations to reasonable modifications (physical changes to premises, like grab bars or wheelchair ramps), which require different legal framing.

Technical audit of Replit's data security before any production deployment.

Expansion of the benchmark dataset beyond four example letters.

Why It Matters for the Field

This project demonstrates a pattern for automating structured legal interviews and document generation for high-volume, formulaic legal tasks. The key architectural choices are instructive:

The PIN-based handoff between human intake and AI interview solves a real problem: voice-to-text is unreliable for proper nouns and addresses, but those details are already collected during intake. Don't re-collect them. Pull them from the case management system.

The two-model strategy (lightweight model for real-time conversation, powerful model for document generation) is a practical approach to the latency problem in voice AI.

The client-never-sees-the-letter decision reflects a governance pattern that other legal aid organizations deploying AI document generation will face: where in the workflow do you gate human review, and what happens if an AI-generated legal document reaches a client or opposing party without attorney sign-off?

The demand letter use case itself is highly replicable. Reasonable accommodation letters follow a standard structure, cite the same statutes, and vary primarily in the facts of the client's situation. Any legal aid organization handling FHA accommodation requests could adapt this approach. With adjusted legal citations, it could extend beyond California.